{{Short description|Cooling system used in data centers}}


{{multiple issues|1=


{{copy edit|date=August 2025|for=excessive upper case, general prose}}{{no footnotes|date=August 2025}}{{external links|date=August 2025}}

}}

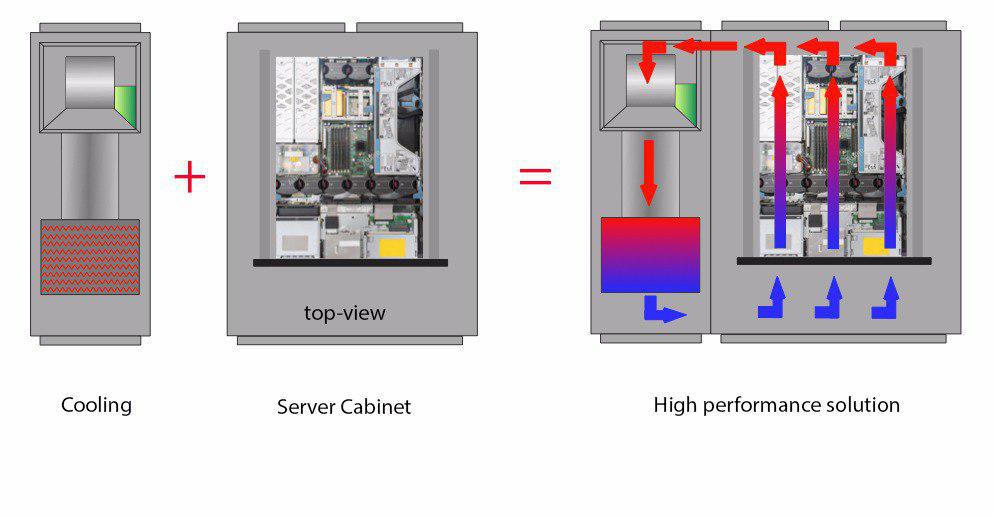

[[File:The proximity of the cooling system with the server cabinet allows a high-performance solution.jpg|thumb|Placing the cooling system close to the server cabinet allows a high-performance solution]]

== Air conditioner types ==

Commercially available close-coupled solutions can be divided into two categories: open-loop and closed-loop.

=== Open-loop configuration ===

Open-loop configurations are not fully independent of the room in which they are installed: their air flows interact with the ambient room environment.

==== In-row air conditioners ====

Row-based air conditioning units are installed within the rows of racks. Air flows generally follow short, linear paths, which reduces the power needed to drive the fans and increases [[Energy efficiency (physics)|energy efficiency]].

A row-based cooling solution has an advantage over a room-based solution, because it can be better adapted to the cooling needs of specific rows. To maximise performance, however, the conditioning units should not be placed at the beginning or the end of a row.

==== Rear door heat exchangers ====

This type of solution replaces the rear door of an existing rack with a heat exchanger.

These [[heat exchanger]]s take advantage of the front-to-back airflow of most IT equipment: the [[Server (computing)|servers]] expel warm air, which passes through the heat exchanger coil and is returned to the room at a cooler temperature.

Because cooling units of this category do not occupy additional floor space, they are particularly suitable either for cooling spaces originally designed as data centers or for supplementing an existing cooling system.

==== Overhead heat exchangers ====

Generally, a heat exchanger of this type discharges cold air from the ceiling into the cold aisle, while the exhaust air rises into vents in the ceiling. In close-coupled systems, the units are positioned directly above the servers, which makes cold air delivery and hot air return much more precise.

Because a system of this type is mounted overhead, it does not need additional floor space in the room.

=== Closed-loop configuration ===

Closed-loop cooling configurations act independently of the room in which they are installed; the rack and the heat exchanger work exclusively with one another, creating an internal microclimate.

==== In-rack cooling ====

The cooling system adjoins the server rack, and both are completely sealed. The solid doors on the enclosure and the in-row air conditioners contain the air flow, while fans direct cold air to the server inlet and exhaust air through the cooling coil.

The closed-loop design allows very focused cooling at rack level. Very dense equipment can be installed regardless of the ambient environment, which gives the flexibility to install IT equipment in unconventional spaces.

== Efficiency ==


In the traditional layout, the fans must move air from the perimeter of the room, under the raised floor, and through perforated floor tiles into the [[Server (computing)|server]] intakes. This process requires energy, the amount of which varies with the type of structure. Obstructions under the raised floor, such as large cable bundles and conduits, often require additional fan energy to move the required volume of cold air.

Because the cooling system and the equipment rack are close to one another in close-coupled solutions, less energy is needed. In an in-row configuration, the cooling unit is integrated into the row of racks and supplies air directly to the row, so there are no underfloor obstructions to consider. It is estimated that a close-coupled system can reduce annual energy use by up to 95% compared to a traditional computer room air conditioner (CRAC) system of equal cooling capacity.

Some cooling configurations can be fitted with variable-speed fans, which adapt better to the workload and therefore to the internal temperature of the rack. Running the fans at the minimum speed that satisfies the requirements of the [[data center]] is very important for reducing energy consumption.

The percentage of energy saved, and hence the reduction in total energy cost, grows more than proportionally with the reduction in air flow: for example, reducing the fan speed by 10% saves about 27% of the energy.

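{| class="wikitable"
! Flow (%) !! Hours !! Annual energy !! Annual cost !! RPM !! Savings
|-
| 100 || 8760 || 49,774.43 || 1,742.10 || 2040 || 0%
|-
| 95 || 8760 || 42,774.64 || 1,493.64 || 1938 || 14.26%
|-
| 90 || 8760 || 36,285.56 || 1,269.99 || 1836 || 27.10%
|-
| 85 || 8760 || 30,567.72 || 1,069.87 || 1734 || 38.59%
|-
| 80 || 8760 || 25,484.51 || 891.96 || 1632 || 48.80%
|-
| 75 || 8760 || 20,998.59 || 734.95 || 1530 || 57.81%
|-
| 70 || 8760 || 17,072.63 || 697.01 || 1428 || 59.99%
|}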

Modularity also contributes to efficiency: with a close-coupled solution, additional air conditioners can be installed in anticipation of an increase in data center capacity.

=== Refrigerated water temperatures ===

In traditional systems, the refrigerated water supply temperature is typically between 6 and 7 °C. Such cold water is needed to produce air cold enough to compensate for the temperature rise that occurs on the data center floor, where the cold inlet air and the hot exhaust air mix. The server inlet air temperature must nevertheless remain between 18 and 26.5 °C, the range established by [[ASHRAE]].

Because the refrigeration system is close to the load, and because of the design of the cooling coil, some types of close-coupled systems can use warmer inlet water while remaining within the ASHRAE guidelines.

The chillers account for 30–40% of the energy consumption of a data center, largely because of mechanical refrigeration. A higher water inlet temperature increases the number of hours in which [[free cooling]] is possible and therefore increases the efficiency of the chiller.

== Close-coupled system of Google data centers ==

[[File:Data google 1.jpg|thumb|Google Data Center – Photo: [http://conniezhou.com/ Connie Zhou]]]


According to [http://www.datacenterknowledge.com/archives/2016/03/25/kava-google-redesigns-data-center-cooling-every-12-to-18-months statements] by [https://imasons.org/team/joe-kava/ Joseph Kava], vice president of [https://www.google.com/about/datacenters/ data centers] at [[Google]], the company redesigns the cooling system of its data centers every 12 to 18 months and also focuses on close-coupled systems.

In 2012, Google published a [https://www.google.com/about/datacenters/gallery/#/ photo gallery] showing the design of its cooling system, accompanied by Kava's explanation of how it works.

In the [[data center]] shown, the rooms themselves serve as cold aisles; there is a raised floor, but it has no perforated tiles. Cooling takes place in enclosed hot aisles with rows of racks on both sides. Cooling coils supplied with cold water form the ceiling of these hot aisles, which also house the pipes that carry water to and from the cooling towers located in another part of the building.

The air in the room is generally kept at around 26.5 °C and warms to about 49 °C as it comes into contact with the server components. Fans direct the warm air into the enclosed hot aisle, where it rises to the top, passes through the cooling coil and is cooled back to room temperature. Flexible piping connects to the cooling coil at the top of the hot aisle, descends through an opening in the floor and runs under the raised floor.

Kava stated: “If we had leaks in the conduits, the water would sink down into our raised floor. We have a lot of experience with this design, and it has never happened a large water loss.” The statement confirms that the design includes a contingency for water leaks and that the proximity of liquids to the servers is not considered problematic.

Referring to other cooling designs, in which ceiling installations return the hot exhaust air to computer room air conditioners (CRACs) located along the perimeter of the raised floor area, Kava also stated: “The whole system is inefficient because the hot air is moved over a long distance while traveling towards the ‘CRAC’, while a Close Coupled system is significantly more efficient.”



Close-coupled cooling is a cooling approach used mainly in data centers. Its goal is to bring heat transfer as close to the heat source as possible. Moving the air conditioner closer to the equipment rack ensures that exhaust air is captured more immediately and that cool air goes exactly where it is needed.

== Air conditioner types ==

Commercially available close-coupled solutions can be divided into two categories: open-loop and closed-loop.

=== Open-loop configuration ===

Open-loop configurations are not fully independent of the room in which they are installed: their air flows interact with the surrounding conditions of the room.

==== In-row air conditioners ====

Row-based air conditioning units are installed within the rows of racks. Air flows generally follow short, linear paths, which reduces the power needed to drive the fans and therefore increases energy efficiency.

A row-based cooling solution can perform better than a single cooling system for the whole room, because it can be adapted to the cooling needs of specific rows.

==== Rear door heat exchangers ====

A rear door heat exchanger replaces the rear door of an existing rack with a heat exchange system.

These heat exchangers take advantage of the front-to-back airflow found in most IT equipment: the servers expel warm air, which passes through the heat exchanger coil and is returned to the room at a cooler temperature.

Because these cooling units do not take up additional floor space, they are particularly suitable either for cooling spaces originally designed as data centers or for supplementing an existing cooling system.

==== Overhead heat exchangers ====

Generally, an overhead heat exchanger delivers cold air from the ceiling into the cold aisle, while warm exhaust air rises naturally into vents in the ceiling. In close-coupled systems, the units are positioned directly above the servers, which makes cold air delivery and hot air return much more precise.

Because a system of this type is mounted overhead, it does not need floor space in the room.

=== Closed-loop configuration ===

Closed-loop cooling configurations act independently of the room in which they are installed; the rack and the heat exchanger work exclusively with one another, creating an internal microclimate.

==== In-rack cooling ====

The cooling system sits next to the server rack, and both are completely sealed. The solid doors on the enclosure and the in-row air conditioners contain the air flow, while fans direct cold air to the server inlet and exhaust air through the cooling coil.

The closed-loop design allows very focused cooling at rack level. Very dense IT equipment can be installed regardless of the external environment, which gives the flexibility to install the equipment in unconventional spaces.

== Efficiency ==

In the traditional layout, the fans must move air from the perimeter of the room, under the raised floor, and through holes in the floor tiles into the server intakes. This process requires energy, the amount of which varies with the type of structure. Obstructions under the raised floor, such as large cable bundles and conduits, often require additional fan energy to move the required volume of cold air.

Because the cooling system and the equipment rack are close to one another in close-coupled solutions, less energy is needed. In an in-row configuration, the cooling unit is integrated into the row of racks and supplies air directly to the row, so there are no underfloor obstructions to consider. It is estimated that a close-coupled system can reduce annual energy use by up to 95% compared to a traditional CRAC system of equal cooling capacity.

Some cooling configurations can be fitted with variable-speed fans, which adapt better to the workload and to the internal temperature of the rack. Running the fans at the minimum speed that satisfies the requirements of the data center also helps to reduce energy consumption.

The percentage of energy saved grows more than proportionally with the reduction in air flow: for example, reducing the fan speed by 10% saves about 27% of the energy.

| Flow (%) | Hours | Annual energy | Annual cost | RPM | Savings |
|---|---|---|---|---|---|
| 100 | 8760 | 49,774.43 | 1,742.10 | 2040 | 0% |
| 95 | 8760 | 42,774.64 | 1,493.64 | 1938 | 14.26% |
| 90 | 8760 | 36,285.56 | 1,269.99 | 1836 | 27.10% |
| 85 | 8760 | 30,567.72 | 1,069.87 | 1734 | 38.59% |
| 80 | 8760 | 25,484.51 | 891.96 | 1632 | 48.80% |
| 75 | 8760 | 20,998.59 | 734.95 | 1530 | 57.81% |
| 70 | 8760 | 17,072.63 | 697.01 | 1428 | 59.99% |
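The more-than-proportional savings described above are what the fan affinity laws predict: for an idealised fan, air flow scales linearly with rotational speed while power scales with its cube. The following Python sketch is an illustration under that assumption and is not taken from the cited sources; the function name `fan_energy_saving` is hypothetical, and real fans deviate from the cube law because of motor and drive losses.

```python
def fan_energy_saving(speed_fraction: float) -> float:
    """Fraction of fan energy saved when running at speed_fraction of
    full speed, assuming the idealised affinity law power ~ speed ** 3."""
    if not 0.0 < speed_fraction <= 1.0:
        raise ValueError("speed_fraction must be in (0, 1]")
    return 1.0 - speed_fraction ** 3


# Print the idealised saving for a few speed settings.
for percent in (100, 95, 90, 85, 80, 75):
    saving = fan_energy_saving(percent / 100)
    print(f"{percent:>3}% speed -> {saving:.2%} energy saved")
```

Under this assumption, a 10% speed reduction gives a saving of about 27%, in line with the example in the text and with the savings figure listed for 90% flow.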

Despite expectations of explosive growth in sales of close-coupled solutions, more recent studies have shown slower growth. A possible reason is that in-row solutions provide significant energy savings only when racks reach a density threshold of 8–10 kW; average densities in today's medium-sized data centers are about 5 kW, so the energy savings do not fully justify the higher investment cost of the cooling system.

=== Refrigerated water temperatures ===

In traditional systems, the refrigerated water supply temperature is typically between 6 and 7 °C. Such cold water is needed to produce sufficiently cold air, because the cold inlet air and the hot exhaust air mix in the room. The server inlet air temperature must nevertheless remain between 18 and 26.5 °C, the range established by ASHRAE.

Because the refrigeration system is close to the load, and because of the design of the cooling coil, some types of close-coupled systems can use warmer inlet water while remaining within the ASHRAE guidelines.

The chillers account for 30–40% of the energy consumption of a data center, and most of that consumption is due to mechanical refrigeration. A higher water inlet temperature increases the number of hours in which free cooling is possible and therefore increases the efficiency of the chiller.
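The effect of a warmer water setpoint on free cooling can be sketched with a simple hour count. The example below is a rough illustration, not a method from the cited sources: the function `free_cooling_hours`, the 4 K heat-exchanger approach and the sample temperatures are all hypothetical, and a real assessment would use a full year of local weather data.

```python
def free_cooling_hours(outdoor_temps_c, water_supply_c, approach_k=4.0):
    """Count the hours in which outdoor conditions are cold enough to
    reach the water supply temperature without mechanical refrigeration.

    approach_k is an assumed heat-exchanger approach, not a sourced value.
    """
    threshold = water_supply_c - approach_k
    return sum(1 for t in outdoor_temps_c if t <= threshold)


# Made-up hourly outdoor temperatures for one day (degrees Celsius).
sample_day = [1, 1, 0, 0, 2, 4, 7, 9, 11, 13, 15, 16,
              17, 17, 16, 14, 12, 10, 8, 6, 5, 4, 3, 2]

# Compare a traditional setpoint with a warmer, hypothetical one.
for setpoint in (7.0, 15.0):
    hours = free_cooling_hours(sample_day, setpoint)
    print(f"water supply {setpoint} C: {hours} free-cooling hours")
```

With these invented figures, raising the setpoint from 7 °C to 15 °C more than doubles the number of hours in which free cooling would be available.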

== Close-coupled system of Google data centers ==

According to statements by Joseph Kava, Google's vice president of data centers, the company redesigns the cooling system of its data centers every 12 to 18 months and also focuses on close-coupled systems.

In 2012, Google published a photo gallery showing the design of its cooling system, accompanied by Kava's explanation of how it works.

In the data center shown, the rooms themselves serve as cold aisles; there is a raised floor, but it has no perforated tiles. Cooling takes place in enclosed hot aisles with rows of racks on both sides. Cooling coils supplied with cold water form the ceiling of these hot aisles, which also house the pipes that carry water to and from the cooling towers located in another part of the building.

The air in the room is generally kept at around 26.5 °C and warms to about 49 °C as it comes into contact with the server components. Fans direct the warm air into the enclosed hot aisle, where it rises to the top, passes through the cooling coil and is cooled back to room temperature. Flexible piping connects to the cooling coil at the top of the hot aisle, descends through an opening in the floor and runs under the raised floor.

Kava stated: “If we had leaks in the conduits, the water would sink down into our raised floor. We have a lot of experience with this design, and it has never happened a large water loss.” The statement confirms that the design includes a contingency for water leaks and that the proximity of liquids to the servers is not considered problematic.

Referring to other cooling designs, in which ceiling installations return the hot exhaust air to computer room air conditioners (CRACs) located along the perimeter of the raised floor area, Kava also stated that the “system is inefficient because the hot air is moved over a long distance while traveling towards the ‘CRAC’, while a close coupled system is significantly more efficient.”
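The temperature rise quoted above determines how much air must pass through the servers for a given heat load. The sketch below applies the standard sensible-heat relation Q = ρ · V · c<sub>p</sub> · ΔT; the air density and specific heat are assumed typical values, the function name `airflow_for_heat_load` is hypothetical, and the 1 kW load is only an example.

```python
AIR_DENSITY = 1.2         # kg/m^3, assumed (air near 20 degrees C)
AIR_SPECIFIC_HEAT = 1005  # J/(kg*K), assumed constant


def airflow_for_heat_load(heat_w, inlet_c, outlet_c):
    """Volumetric airflow (m^3/s) needed to carry away heat_w watts
    when the air warms from inlet_c to outlet_c."""
    delta_t = outlet_c - inlet_c
    if delta_t <= 0:
        raise ValueError("outlet must be warmer than inlet")
    return heat_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t)


# Example: 1 kW of IT load with air warming from 26.5 to 49 degrees C.
flow = airflow_for_heat_load(1000, 26.5, 49.0)
print(f"{flow:.4f} m^3/s per kW of IT load")
```

Because the required airflow is inversely proportional to the temperature rise, a design that tolerates a large rise across the servers needs less air movement, and therefore less fan energy, per kilowatt of load.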
